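2. Spark SQL Programming

2.1 SparkSession: The New Entry Point

In older versions, Spark SQL provided two entry points for SQL queries: SQLContext, for the SQL queries that Spark itself provides, and HiveContext, for queries against Hive.

SparkSession is the newest entry point for SQL queries in Spark. It is essentially a combination of SQLContext and HiveContext, so any API available on SQLContext or HiveContext is also available on SparkSession.

SparkSession wraps a SparkContext internally, so the computation is actually carried out by the SparkContext.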
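The relationship between the session and its wrapped context can be sketched as follows. This is a minimal illustration, not part of the original notes: the object name SessionSketch, the helper doubled, and the local[*] master used in the usage line are assumptions chosen for a local run.

```scala
import org.apache.spark.sql.SparkSession

// Hypothetical helper object, for illustration only.
object SessionSketch {
  // Uses the SparkContext wrapped by the session to run a plain RDD job.
  def doubled(spark: SparkSession): Array[Int] = {
    val sc = spark.sparkContext // the context that actually runs the job
    sc.parallelize(Seq(1, 2, 3)).map(_ * 2).collect()
  }
}
```

A session for a local run could be obtained with SparkSession.builder().master("local[*]").appName("SessionSketch").getOrCreate(); in spark-shell the session already exists as spark.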

2.2 DataFrame

2.2.1 Creation

In Spark SQL, SparkSession is the entry point for creating DataFrames and executing SQL. A DataFrame can be created in three ways: from a Spark data source, by converting an existing RDD, or by querying a Hive table.

1) Create from a Spark data source

(1) List the file formats that Spark data sources can read (type spark.read. and press Tab)

scala> spark.read.
csv   format   jdbc   json   load   option   options   orc   parquet   schema   table   text   textFile

(2) Read a JSON file to create a DataFrame

scala> val df = spark.read.json("/opt/module/spark/examples/src/main/resources/people.json")
df: org.apache.spark.sql.DataFrame = [age: bigint, name: string]

(3) Show the result

scala> df.show
+----+-------+
| age|   name|
+----+-------+
|null|Michael|
|  30|   Andy|
|  19| Justin|
+----+-------+

2) Convert from an RDD

Covered separately in Section 2.2.4 (see also Section 2.5).

3) Query a Hive table

Covered separately in Section 3.3.

2.2.2 SQL-Style Syntax (Primary)

1) Create a DataFrame

scala> val df = spark.read.json("/opt/module/spark/examples/src/main/resources/people.json")
df: org.apache.spark.sql.DataFrame = [age: bigint, name: string]

2) Create a temporary view on the DataFrame

scala> df.createOrReplaceTempView("people")

3) Query the whole table with a SQL statement

scala> val sqlDF = spark.sql("SELECT * FROM people")
sqlDF: org.apache.spark.sql.DataFrame = [age: bigint, name: string]

4) Show the result

scala> sqlDF.show
+----+-------+
| age|   name|
+----+-------+
|null|Michael|
|  30|   Andy|
|  19| Justin|
+----+-------+

Note: a temporary view is scoped to the session; once the session ends, the view is gone. To make a view valid for the whole application, use a global temporary view. A global temporary view must be accessed by its fully qualified name, for example global_temp.people.

5) Create a global temporary view on the DataFrame

scala> df.createGlobalTempView("people")

6) Query the whole table with a SQL statement, from the current session and from a new session

scala> spark.sql("SELECT * FROM global_temp.people").show()
+----+-------+
| age|   name|
+----+-------+
|null|Michael|
|  30|   Andy|
|  19| Justin|
+----+-------+

scala> spark.newSession().sql("SELECT * FROM global_temp.people").show()
+----+-------+
| age|   name|
+----+-------+
|null|Michael|
|  30|   Andy|
|  19| Justin|
+----+-------+

2.2.3 DSL-Style Syntax (Secondary)

1) Create a DataFrame

scala> val df = spark.read.json("/opt/module/spark/examples/src/main/resources/people.json")
df: org.apache.spark.sql.DataFrame = [age: bigint, name: string]

2) Print the schema of the DataFrame

scala> df.printSchema
root
 |-- age: long (nullable = true)
 |-- name: string (nullable = true)

3) Select only the "name" column

scala> df.select("name").show()
+-------+
|   name|
+-------+
|Michael|
|   Andy|
| Justin|
+-------+

4) Select the "name" column together with "age + 1"

scala> df.select($"name", $"age" + 1).show()
+-------+---------+
|   name|(age + 1)|
+-------+---------+
|Michael|     null|
|   Andy|       31|
| Justin|       20|
+-------+---------+

5) Select the rows whose "age" is greater than 21

scala> df.filter($"age" > 21).show()
+---+----+
|age|name|
+---+----+
| 30|Andy|
+---+----+

6) Group by "age" and count the rows in each group

scala> df.groupBy("age").count().show()
+----+-----+
| age|count|
+----+-----+
|  19|    1|
|null|    1|
|  30|    1|
+----+-----+

Test:

scala> spark.read.
csv   format   jdbc   json   load   option   options   orc   parquet   schema   table   text   textFile

scala> spark.read.json("./examples/src/main/resources/people.json")
res0: org.apache.spark.sql.DataFrame = [age: bigint, name: string]

scala> res0.collect
res1: Array[org.apache.spark.sql.Row] = Array([null,Michael], [30,Andy], [19,Justin])

scala> res0.show
+----+-------+
| age|   name|
+----+-------+
|null|Michael|
|  30|   Andy|
|  19| Justin|
+----+-------+

scala> res0.select("name").show
+-------+
|   name|
+-------+
|Michael|
|   Andy|
| Justin|
+-------+

scala> res0.select("age","name").show
+----+-------+
| age|   name|
+----+-------+
|null|Michael|
|  30|   Andy|
|  19| Justin|
+----+-------+

scala> res0.select($"age"+1).show
+---------+
|(age + 1)|
+---------+
|     null|
|       31|
|       20|
+---------+

scala> res0.select($"age"+1,$"name").show
+---------+-------+
|(age + 1)|   name|
+---------+-------+
|     null|Michael|
|       31|   Andy|
|       20| Justin|
+---------+-------+

scala> res0.select($"name").show
+-------+
|   name|
+-------+
|Michael|
|   Andy|
| Justin|
+-------+

scala> res0.first
res9: org.apache.spark.sql.Row = [null,Michael]

scala> res0.filter($"age">20).show
+---+----+
|age|name|
+---+----+
| 30|Andy|
+---+----+

scala> res0.create
createGlobalTempView   createOrReplaceTempView   createTempView

scala> res0.createTempView("people")

scala> spark.sql("select * from people").show
+----+-------+
| age|   name|
+----+-------+
|null|Michael|
|  30|   Andy|
|  19| Justin|
+----+-------+

scala> spark.sql("select * from people where age > 20").show
+---+----+
|age|name|
+---+----+
| 30|Andy|
+---+----+

2.2.4 Converting an RDD to a DataFrame

Note: any conversion between an RDD and a DataFrame or Dataset requires import spark.implicits._ (here spark is not a package name; it is the name of the SparkSession object).

Prerequisite: import the implicit conversions and create an RDD

scala> import spark.implicits._
import spark.implicits._

scala> val peopleRDD = sc.textFile("examples/src/main/resources/people.txt")
peopleRDD: org.apache.spark.rdd.RDD[String] = examples/src/main/resources/people.txt MapPartitionsRDD[3] at textFile at <console>:27

1) Convert by naming the columns manually

scala> peopleRDD.map{x=>val para = x.split(",");(para(0),para(1).trim.toInt)}.toDF("name","age")
res1: org.apache.spark.sql.DataFrame = [name: string, age: int]

2) Convert by reflection (requires a case class)

(1) Define a case class

scala> case class People(name:String, age:Int)

(2) Convert the RDD to a DataFrame through the case class

scala> peopleRDD.map{ x => val para = x.split(",");People(para(0),para(1).trim.toInt)}.toDF
res2: org.apache.spark.sql.DataFrame = [name: string, age: int]

3) Convert programmatically (for reference)

(1) Import the required types

scala> import org.apache.spark.sql.types._
import org.apache.spark.sql.types._

(2) Create the schema

scala> val structType: StructType = StructType(StructField("name", StringType) :: StructField("age", IntegerType) :: Nil)
structType: org.apache.spark.sql.types.StructType = StructType(StructField(name,StringType,true), StructField(age,IntegerType,true))

(3) Import Row

scala> import org.apache.spark.sql.Row
import org.apache.spark.sql.Row

(4) Build an RDD of Row whose fields match the schema

scala> val data = peopleRDD.map{ x => val para = x.split(",");Row(para(0),para(1).trim.toInt)}
data: org.apache.spark.rdd.RDD[org.apache.spark.sql.Row] = MapPartitionsRDD[6] at map at <console>:33

(5) Create the DataFrame from the data and the schema

scala> val dataFrame = spark.createDataFrame(data, structType)
dataFrame: org.apache.spark.sql.DataFrame = [name: string, age: int]

2.2.5 Converting a DataFrame to an RDD

Simply call rdd on the DataFrame.

1) Create a DataFrame

scala> val df = spark.read.json("/opt/module/spark/examples/src/main/resources/people.json")
df: org.apache.spark.sql.DataFrame = [age: bigint, name: string]

2) Convert the DataFrame to an RDD

scala> val dfToRDD = df.rdd
dfToRDD: org.apache.spark.rdd.RDD[org.apache.spark.sql.Row] = MapPartitionsRDD[19] at rdd at <console>:29

3) Print the RDD (the rows keep the DataFrame's column order, age then name)

scala> dfToRDD.collect
res13: Array[org.apache.spark.sql.Row] = Array([null,Michael], [30,Andy], [19,Justin])

2.3 Dataset

A Dataset is a strongly typed collection of data, so the corresponding type information must be provided.

2.3.1 Creation

1) Define a case class

scala> case class Person(name: String, age: Long)
defined class Person

2) Create a Dataset

scala> val caseClassDS = Seq(Person("Andy", 32)).toDS()
caseClassDS: org.apache.spark.sql.Dataset[Person] = [name: string, age: bigint]

2.3.2 Converting an RDD to a Dataset

Spark SQL can automatically convert an RDD of case class instances into a DataFrame or Dataset. The case class defines the structure of the table, and its fields become the column names through reflection. Case classes may contain complex types such as Seq or Array.

1) Create an RDD

scala> val peopleRDD = sc.textFile("examples/src/main/resources/people.txt")
peopleRDD: org.apache.spark.rdd.RDD[String] = examples/src/main/resources/people.txt MapPartitionsRDD[3] at textFile at <console>:27

2) Define a case class

scala> case class Person(name: String, age: Long)
defined class Person

3) Convert the RDD to a Dataset

scala> peopleRDD.map(line => {val para = line.split(",");Person(para(0),para(1).trim.toInt)}).toDS
res8: org.apache.spark.sql.Dataset[Person] = [name: string, age: bigint]

2.3.3 Converting a Dataset to an RDD

Simply call the rdd method.

1) Create a Dataset

scala> val DS = Seq(Person("Andy", 32)).toDS()
DS: org.apache.spark.sql.Dataset[Person] = [name: string, age: bigint]

2) Convert the Dataset to an RDD

scala> DS.rdd
res11: org.apache.spark.rdd.RDD[Person] = MapPartitionsRDD[15] at rdd at <console>:28

2.4 Interoperation Between DataFrame and Dataset

2.4.1 DataFrame to Dataset

1) Create a DataFrame

scala> val df = spark.read.json("examples/src/main/resources/people.json")
df: org.apache.spark.sql.DataFrame = [age: bigint, name: string]

2) Define a case class

scala> case class Person(name: String, age: Long)
defined class Person

3) Convert the DataFrame to a Dataset

scala> df.as[Person]
res14: org.apache.spark.sql.Dataset[Person] = [age: bigint, name: string]

This approach declares the type of each column through the case class and then calls as to obtain a Dataset. It is very convenient when the data arrives as a DataFrame but individual fields need to be processed. Always add import spark.implicits._ when using these conversions; without it, toDF and toDS cannot be used.
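To illustrate what "processing individual fields" looks like after as[Person], here is a small sketch. It builds the DataFrame from an in-memory Seq rather than from people.json (an assumption made so that no null ages appear), and the object name TypedFieldsSketch and the method namesOlderThan are hypothetical.

```scala
import org.apache.spark.sql.{Dataset, SparkSession}

// Case classes used with as[...] are best defined at the top level.
final case class Person(name: String, age: Long)

// Hypothetical helper object, for illustration only.
object TypedFieldsSketch {
  // Returns the names of people older than minAge using typed field access.
  def namesOlderThan(spark: SparkSession, minAge: Long): Array[String] = {
    import spark.implicits._ // needed for toDF, as[Person], and map
    val df = Seq(("Andy", 32L), ("Justin", 19L)).toDF("name", "age")
    val ds: Dataset[Person] = df.as[Person]
    // Fields are accessed as ordinary members, checked at compile time.
    ds.filter(_.age > minAge).map(_.name).collect()
  }
}
```

With a DataFrame the same filter would be written against column names, for example df.filter($"age" > minAge), and a misspelled column would only fail at run time.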

2.4.2 Dataset to DataFrame

1) Define a case class

scala> case class Person(name: String, age: Long)
defined class Person

2) Create a Dataset

scala> val ds = Seq(Person("Andy", 32)).toDS()
ds: org.apache.spark.sql.Dataset[Person] = [name: string, age: bigint]

3) Convert the Dataset to a DataFrame

scala> val df = ds.toDF
df: org.apache.spark.sql.DataFrame = [name: string, age: bigint]

4) Show the result

scala> df.show
+----+---+
|name|age|
+----+---+
|Andy| 32|
+----+---+

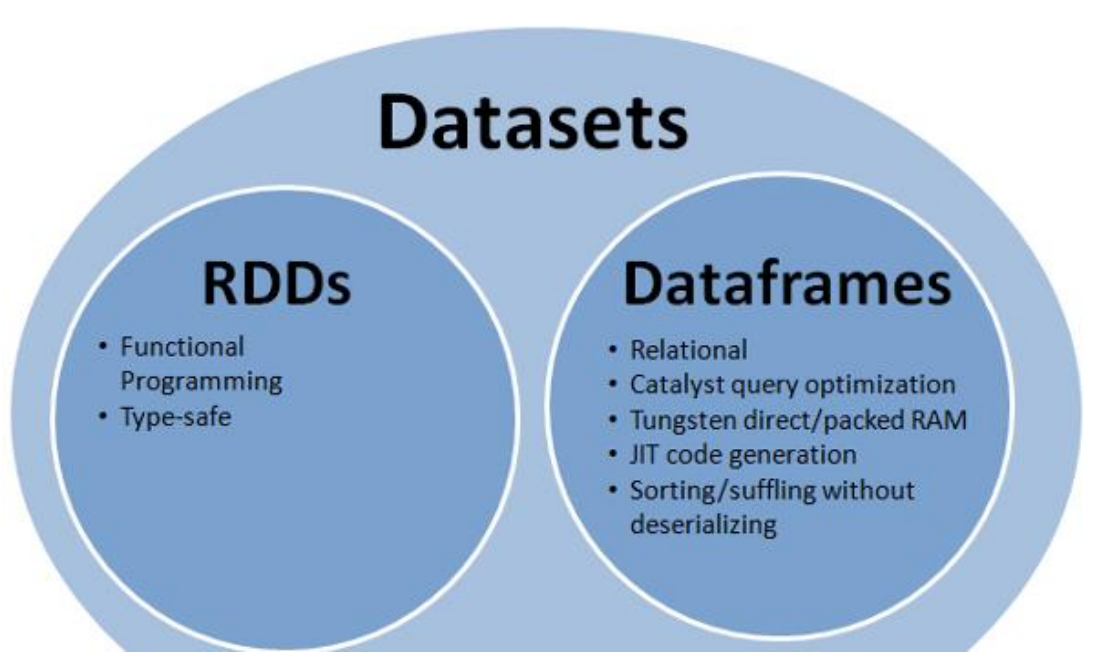

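2.5 RDD, DataFrame, and Dataset

Spark SQL provides two newer abstractions, DataFrame and Dataset. How do they differ from RDD? First, in terms of the version in which each appeared:

RDD (Spark 1.0) -> DataFrame (Spark 1.3) -> Dataset (Spark 1.6)

Given the same data, all three structures produce the same result after computation. What differs is their execution efficiency and the way they execute.

In later Spark versions, Dataset is expected to gradually replace RDD and DataFrame as the single API.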

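2.5.1 What the Three Have in Common

1. RDD, DataFrame, and Dataset are all resilient distributed datasets on the Spark platform, designed to make processing very large data convenient.

2. All three are lazy: creating them or applying a transformation such as map does not execute anything immediately. Computation starts only when an action such as foreach is encountered.

3. All three cache computation automatically according to the memory available to Spark, so there is little need to worry about running out of memory even when the data is large.

4. All three have the concept of partitions.

5. All three share many functions, such as filter and sorting.

6. Many operations on DataFrame and Dataset require the following import:

import spark.implicits._

7. Both DataFrame and Dataset can use pattern matching to obtain the value and type of each field.

DataFrame:

testDF.map {
  case Row(col1: String, col2: Int) =>
    println(col1); println(col2)
    col1
  case _ => ""
}

Dataset:

case class Coltest(col1: String, col2: Int) extends Serializable // define field names and types
testDS.map {
  case Coltest(col1: String, col2: Int) =>
    println(col1); println(col2)
    col1
  case _ => ""
}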
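The lazy behaviour described in item 2 can be observed with an accumulator, as in the sketch below. This is an illustration under assumptions: the object name LazySketch is hypothetical, and the counter is only meant for observation in a local run, since accumulator updates made inside transformations may be applied more than once if a task is retried.

```scala
import org.apache.spark.sql.SparkSession

// Hypothetical helper object, for illustration only.
object LazySketch {
  // Returns the number of elements touched before and after an action.
  def run(spark: SparkSession): (Long, Long) = {
    import spark.implicits._
    val touched = spark.sparkContext.longAccumulator("touched")
    // map is a transformation: defining it does not run the function.
    val ds = Seq(1, 2, 3).toDS().map { x => touched.add(1); x * 2 }
    val before = touched.sum // still 0, nothing has executed yet
    ds.collect()             // collect is an action and triggers the job
    val after = touched.sum  // now reflects the processed elements
    (before, after)
  }
}
```

The same pattern applies to an RDD or a DataFrame: the counter stays at zero until an action such as collect, count, or foreach is called.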

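2.5.2 How the Three Differ

1. RDD:

1) RDD is generally used together with Spark MLlib.

2) RDD does not support Spark SQL operations.

2. DataFrame:

1) Unlike RDD and Dataset, every row of a DataFrame has the fixed type Row. Column values cannot be accessed directly; each field must be extracted by parsing the row, for example:

testDF.foreach { line =>
  val col1 = line.getAs[String]("col1")
  val col2 = line.getAs[String]("col2")
}

2) DataFrame and Dataset are generally not used together with the RDD-based Spark MLlib API.

3) Both DataFrame and Dataset support Spark SQL operations such as select and groupBy, and both can be registered as a temporary table/view and queried with SQL statements, for example:

dataDF.createOrReplaceTempView("tmp")
spark.sql("select ROW,DATE from tmp where DATE is not null order by DATE").show(100,false)

4) DataFrame and Dataset support some particularly convenient ways of saving data. For example, when saving as CSV a header row can be included, so the field name of every column is clear at a glance.

// Save (requires import org.apache.spark.sql.SaveMode)
val saveoptions = Map("header" -> "true", "delimiter" -> " ", "path" -> "hdfs://hadoop102:9000/test")
datawDF.write.format("com.atguigu.spark.csv").mode(SaveMode.Overwrite).options(saveoptions).save()

// Read
val options = Map("header" -> "true", "delimiter" -> " ", "path" -> "hdfs://hadoop102:9000/test")
val datarDF = spark.read.options(options).format("com.atguigu.spark.csv").load()

Saving this way makes it easy to recover the mapping between field names and columns, and the delimiter can be chosen freely.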
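Since Spark 2.0, CSV is also available as a built-in data source, so the same round trip can be sketched without naming an external format class. The sketch below is an assumption-laden illustration: the object name CsvSketch, the sample rows, and the ";" separator are made up, and the path is supplied by the caller.

```scala
import org.apache.spark.sql.{DataFrame, SaveMode, SparkSession}

// Hypothetical helper object, for illustration only.
object CsvSketch {
  // Writes a small DataFrame as CSV with a header row and reads it back.
  def roundTrip(spark: SparkSession, path: String): DataFrame = {
    import spark.implicits._
    val df = Seq(("Andy", 30), ("Justin", 19)).toDF("name", "age")
    df.write
      .mode(SaveMode.Overwrite)
      .option("header", "true") // first line carries the column names
      .option("sep", ";")       // separator is freely selectable
      .csv(path)
    // Without schema inference every column is read back as a string.
    spark.read.option("header", "true").option("sep", ";").csv(path)
  }
}
```

Because the header is written, the DataFrame read back carries the column names name and age instead of generated names such as _c0 and _c1.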

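3. Dataset:

1) Dataset and DataFrame have exactly the same member functions; the only difference is the data type of each row.

2) A DataFrame can also be called Dataset[Row]: every row has type Row, and without parsing there is no way to know which fields a row has or what their types are. Specific fields can only be extracted with the getAs method mentioned above or with the pattern matching described in item 7 of the commonalities. In a Dataset, by contrast, the row type is not fixed; once a case class is defined, the information in each row can be accessed freely.

case class Coltest(col1: String, col2: Int) extends Serializable // define field names and types
/**
 * rdd:
 * ("a", 1)
 * ("b", 1)
 * ("a", 1)
 */
val test: Dataset[Coltest] = rdd.map { line =>
  Coltest(line._1, line._2)
}.toDS
// foreach is an action, so the fields are actually printed
test.foreach { line =>
  println(line.col1)
  println(line.col2)
}

As this shows, a Dataset is very convenient when a particular field of a row must be accessed. However, when writing a highly generic function, the row type of a Dataset is not known in advance (it may be any case class), so the function cannot adapt to every case. Here DataFrame, that is Dataset[Row], handles the problem well.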
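As a hedged illustration of such a generic function, the sketch below counts the non-null values of every column of any DataFrame, relying only on the column names available at run time. The object name GenericSketch and the method nonNullCounts are hypothetical.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}

// Hypothetical helper object, for illustration only.
object GenericSketch {
  // Works for any schema: it only uses column names known at run time.
  def nonNullCounts(df: DataFrame): Map[String, Long] =
    df.columns.map(c => c -> df.filter(df(c).isNotNull).count()).toMap
}
```

A typed Dataset of any case class can be passed to this function by calling toDF on it first, which is what makes Dataset[Row] a convenient common denominator.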

-- RDD -> DF/DS

-- DF:

Approach 1:
rdd.map{x => val pa = x.split(","); (pa(0).trim,pa(1).trim)}.toDF("name", "age")

Approach 2:
case class People(name:String,age:String)
rdd.map{x => val pa = x.split(","); People(pa(0).trim,pa(1).trim)}.toDF

Approach 3, building the schema programmatically (requires import org.apache.spark.sql.Row and import org.apache.spark.sql.types._):
val rdd = sc.textFile("examples/src/main/resources/people.txt")
val schemaString = "name age"
// createDataFrame(rdd, schema) expects an RDD of Row
val rowRDD = rdd.map{x => val pa = x.split(","); Row(pa(0).trim,pa(1).trim)}
val fields = schemaString.split(" ").map(fieldName => StructField(fieldName, StringType, nullable = true))
val schema = StructType(fields)
spark.createDataFrame(rowRDD, schema)

-- DS:
case class People(name:String,age:String)
rdd.map{x => val pa = x.split(","); People(pa(0).trim,pa(1).trim)}.toDS

scala> rdd.map{x => val pa = x.split(","); People(pa(0).trim,pa(1).trim)}.toDS
res21: org.apache.spark.sql.Dataset[People] = [name: string, age: string]

scala> rdd.map{x => val pa = x.split(","); (pa(0).trim,pa(1).trim)}.toDS
res22: org.apache.spark.sql.Dataset[(String, String)] = [_1: string, _2: string]

scala> val rdd = sc.textFile("examples/src/main/resources/people.txt")
rdd: org.apache.spark.rdd.RDD[String] = examples/src/main/resources/people.txt MapPartitionsRDD[1] at textFile at <console>:24

scala> rdd.collect
res1: Array[String] = Array(Michael, 29, Andy, 30, Justin, 19)

scala> rdd.map{x => val pa = x.split(","); (pa(0).trim,pa(1).trim)}
res2: org.apache.spark.rdd.RDD[(String, String)] = MapPartitionsRDD[2] at map at <console>:27

scala> res2.collect
res3: Array[(String, String)] = Array((Michael,29), (Andy,30), (Justin,19))

scala> import spark.implicits._
import spark.implicits._

scala> res2.to
toDF   toDS   toDebugString   toJavaRDD   toLocalIterator   toString   top

scala> res2.toDF("name", "age")
res5: org.apache.spark.sql.DataFrame = [name: string, age: string]

scala> res5.show
+-------+---+
|   name|age|
+-------+---+
|Michael| 29|
|   Andy| 30|
| Justin| 19|
+-------+---+

scala> case class People(name:String,age:String)
defined class People

scala> rdd.map{x => val pa = x.split(","); People(pa(0).trim,pa(1).trim)}
res8: org.apache.spark.rdd.RDD[People] = MapPartitionsRDD[6] at map at <console>:32

scala> res8.collect
res10: Array[People] = Array(People(Michael,29), People(Andy,30), People(Justin,19))

scala> res8.toDS
res13: org.apache.spark.sql.Dataset[People] = [name: string, age: string]

scala> res13.show
+-------+---+
|   name|age|
+-------+---+
|Michael| 29|
|   Andy| 30|
| Justin| 19|
+-------+---+

scala> res8.toDF
res15: org.apache.spark.sql.DataFrame = [name: string, age: string]

scala> res15.show
+-------+---+
|   name|age|
+-------+---+
|Michael| 29|
|   Andy| 30|
| Justin| 19|
+-------+---+

-- DF -> RDD/DS

-- RDD:
DF.rdd   // values are retrieved from Row; types are not checked at compile time

-- DS:
case class People(name:String,age:String)
DF.as[People]

scala> val rdd = sc.textFile("examples/src/main/resources/people.txt")
rdd: org.apache.spark.rdd.RDD[String] = examples/src/main/resources/people.txt MapPartitionsRDD[1] at textFile at <console>:24

scala> rdd.map{x => val pa = x.split(","); (pa(0).trim,pa(1).trim)}.toDF("name", "age")
res0: org.apache.spark.sql.DataFrame = [name: string, age: string]

scala> val df = res0
df: org.apache.spark.sql.DataFrame = [name: string, age: string]

scala> df.rdd
res2: org.apache.spark.rdd.RDD[org.apache.spark.sql.Row] = MapPartitionsRDD[7] at rdd at <console>:31

scala> res2.map(_.getString(1)).collect
res4: Array[String] = Array(29, 30, 19)

scala> res2.map(_.getString(0)).collect
res5: Array[String] = Array(Michael, Andy, Justin)

scala> res2.map(_.getAs[String](0))
res7: org.apache.spark.rdd.RDD[String] = MapPartitionsRDD[12] at map at <console>:33

scala> res2.map(_.getAs[String](0)).collect
res8: Array[String] = Array(Michael, Andy, Justin)

scala> val df = res0
df: org.apache.spark.sql.DataFrame = [name: string, age: string]

scala> df.as[People]
res16: org.apache.spark.sql.Dataset[People] = [name: string, age: string]

-- DS -> RDD/DF

-- RDD:
DS.rdd   // values are retrieved through the case class; types are checked at compile time

-- DF:
DS.toDF

scala> val rdd = sc.textFile("examples/src/main/resources/people.txt")
rdd: org.apache.spark.rdd.RDD[String] = examples/src/main/resources/people.txt MapPartitionsRDD[1] at textFile at <console>:24

scala> case class People(name:String,age:String)
defined class People

scala> rdd.map{x => val pa = x.split(","); People(pa(0).trim,pa(1).trim)}.toDS
res1: org.apache.spark.sql.Dataset[People] = [name: string, age: string]

scala> val ds = res1
ds: org.apache.spark.sql.Dataset[People] = [name: string, age: string]

scala> ds.rdd
res3: org.apache.spark.rdd.RDD[People] = MapPartitionsRDD[9] at rdd at <console>:33

scala> res3.map(_.name)
res10: org.apache.spark.rdd.RDD[String] = MapPartitionsRDD[14] at map at <console>:35

scala> res3.map(_.name).collect
res11: Array[String] = Array(Michael, Andy, Justin)

scala> ds.toDF
res17: org.apache.spark.sql.DataFrame = [name: string, age: string]

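2.6 Creating a Spark SQL Program in IDEA

Packaging and running the program in IDEA works the same way as for Spark Core. One additional entry is needed in the Maven dependencies: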

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql_2.11</artifactId>

<version>2.1.1</version>

</dependency>

The program is as follows:

package com.lxl.sparksql

import org.apache.spark.sql.SparkSession

object HelloWorld {
  def main(args: Array[String]): Unit = {

    // Create the SparkSession and set the application name
    // (no master is set here; it is supplied when the job is submitted)
    val spark = SparkSession
      .builder()
      .appName("Spark SQL basic example")
      //.config("spark.some.config.option", "some-value")
      .getOrCreate()

    // Import the implicit conversions
    import spark.implicits._

    // Read a local file and create a DataFrame
    val df = spark.read.json("examples/src/main/resources/people.json")

    // Print the data
    df.show()

    // DSL style: people older than 21
    df.filter($"age" > 21).show()

    // Create a temporary view
    df.createOrReplaceTempView("persons")

    // SQL style: people older than 21
    spark.sql("SELECT * FROM persons where age > 21").show()

    // Close the session
    spark.stop()
  }
}

Test:

import org.apache.spark.{SparkConf, SparkContext}
import org.apache.spark.sql.SparkSession

object HelloWorld {
  def main(args: Array[String]): Unit = {
    // Build the SparkConf
    val conf = new SparkConf().setAppName("HelloWorld").setMaster("local[*]")
    // Create the SparkContext
    val sc = new SparkContext(conf)
    // Get the SparkSession
    // val spark = new SparkSession(sc)
    val spark = SparkSession.builder().config(conf).getOrCreate()
    // Create the DataFrame (backslashes in a Windows path must be escaped)
    val df = spark.read.json("D:\\Data\\Spark\\课堂\\people.json")
    // Show all the data
    df.show()
    // DSL
    df.select("name").show()
    // SQL
    df.createTempView("people")
    spark.sql("select * from people").show()
    // Release resources
    spark.close()
    sc.stop()
  }
}

Result:

+----+-------+
| age|   name|
+----+-------+
|null|Michael|
|  30|   Andy|
|  19| Justin|
+----+-------+

+-------+
|   name|
+-------+
|Michael|
|   Andy|
| Justin|
+-------+

+----+-------+
| age|   name|
+----+-------+
|null|Michael|
|  30|   Andy|
|  19| Justin|
+----+-------+

2.7 User-Defined Functions

In the spark-shell, users can define their own functions through spark.udf.

2.7.1 User-Defined Functions (UDF)

scala> val df = spark.read.json("examples/src/main/resources/people.json")
df: org.apache.spark.sql.DataFrame = [age: bigint, name: string]

scala> df.show()
+----+-------+
| age|   name|
+----+-------+
|null|Michael|
|  30|   Andy|
|  19| Justin|
+----+-------+

scala> spark.udf.register("addName", (x:String)=> "Name:"+x)
res5: org.apache.spark.sql.expressions.UserDefinedFunction = UserDefinedFunction(<function1>,StringType,Some(List(StringType)))

scala> df.createOrReplaceTempView("people")

scala> spark.sql("Select addName(name), age from people").show()
+-----------------+----+
|UDF:addName(name)| age|
+-----------------+----+
|     Name:Michael|null|
|        Name:Andy|  30|
|      Name:Justin|  19|
+-----------------+----+

Test:

scala> val rdd = sc.textFile("examples/src/main/resources/people.txt")
rdd: org.apache.spark.rdd.RDD[String] = examples/src/main/resources/people.txt MapPartitionsRDD[18] at textFile at <console>:30

scala> rdd.collect
res20: Array[String] = Array(Michael, 29, Andy, 30, Justin, 19)

scala> rdd.map{x => val pa = x.split(","); People(pa(0).trim,pa(1).trim)}.toDS
res21: org.apache.spark.sql.Dataset[People] = [name: string, age: string]

scala> rdd.map{x => val pa = x.split(","); (pa(0).trim,pa(1).trim)}.toDS
res22: org.apache.spark.sql.Dataset[(String, String)] = [_1: string, _2: string]

scala> val df = spark.read.json("examples/src/main/resources/people.json")
df: org.apache.spark.sql.DataFrame = [age: bigint, name: string]

scala> df.createTempView("people")

scala> spark.sql("select * from people").show
+----+-------+
| age|   name|
+----+-------+
|null|Michael|
|  30|   Andy|
|  19| Justin|
+----+-------+

scala> spark.udf.register("addName",(x:String) => "name:" + x)
res25: org.apache.spark.sql.expressions.UserDefinedFunction = UserDefinedFunction(<function1>,StringType,Some(List(StringType)))

scala> spark.sql("select addName(name) as name from people").show
+------------+
|        name|
+------------+
|name:Michael|
|   name:Andy|
| name:Justin|
+------------+
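A registered UDF is called from SQL text. For the DSL style, a function can instead be wrapped with udf from org.apache.spark.sql.functions and applied to a column. The sketch below is an illustration only; the object name UdfSketch and the method withPrefixedName are hypothetical.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.udf

// Hypothetical helper object, for illustration only.
object UdfSketch {
  // Applies the same "Name:" prefix as addName, but through the DSL.
  def withPrefixedName(spark: SparkSession, df: DataFrame): DataFrame = {
    import spark.implicits._
    val addName = udf((x: String) => "Name:" + x)
    df.select(addName($"name").as("name"), $"age")
  }
}
```

The wrapped function is an ordinary value here, so it does not need a temporary view or a registered name.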

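2.7.2 User-Defined Aggregate Functions

Both the strongly typed Dataset and the weakly typed DataFrame provide built-in aggregate functions such as count(), countDistinct(), avg(), max(), and min(). Beyond these, users can define their own aggregate functions.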
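For comparison with the custom functions, here is a sketch of the built-in aggregates applied to a salary column. The object name BuiltinAggSketch, the method summarize, and the column aliases are assumptions made for illustration.

```scala
import org.apache.spark.sql.{DataFrame, SparkSession}
import org.apache.spark.sql.functions.{avg, count, countDistinct, max, min}

// Hypothetical helper object, for illustration only.
object BuiltinAggSketch {
  // Computes the built-in aggregates over a column named "salary".
  def summarize(df: DataFrame): DataFrame =
    df.agg(
      count("salary").as("n"),
      countDistinct("salary").as("n_distinct"),
      avg("salary").as("avg_salary"),
      max("salary").as("max_salary"),
      min("salary").as("min_salary")
    )
}
```

The result is a single-row DataFrame with one column per aggregate.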

Weakly typed user-defined aggregate function: extend UserDefinedAggregateFunction to implement a custom aggregate function. The following example computes the average salary.

import org.apache.spark.sql.expressions.MutableAggregationBuffer
import org.apache.spark.sql.expressions.UserDefinedAggregateFunction
import org.apache.spark.sql.types._
import org.apache.spark.sql.Row
import org.apache.spark.sql.SparkSession

object MyAverage extends UserDefinedAggregateFunction {

  // Data type of the input arguments of the aggregate function
  def inputSchema: StructType = StructType(StructField("inputColumn", LongType) :: Nil)

  // Data types of the values in the aggregation buffer
  def bufferSchema: StructType = {
    StructType(StructField("sum", LongType) :: StructField("count", LongType) :: Nil)
  }

  // Data type of the returned value
  def dataType: DataType = DoubleType

  // Whether the function always returns the same output for the same input
  def deterministic: Boolean = true

  // Initialize the buffer
  def initialize(buffer: MutableAggregationBuffer): Unit = {
    // Total of the salaries
    buffer(0) = 0L
    // Number of salaries
    buffer(1) = 0L
  }

  // Fold one input row into the buffer (within the same executor)
  def update(buffer: MutableAggregationBuffer, input: Row): Unit = {
    if (!input.isNullAt(0)) {
      buffer(0) = buffer.getLong(0) + input.getLong(0)
      buffer(1) = buffer.getLong(1) + 1
    }
  }

  // Merge buffers coming from different executors
  def merge(buffer1: MutableAggregationBuffer, buffer2: Row): Unit = {
    buffer1(0) = buffer1.getLong(0) + buffer2.getLong(0)
    buffer1(1) = buffer1.getLong(1) + buffer2.getLong(1)
  }

  // Compute the final result
  def evaluate(buffer: Row): Double = buffer.getLong(0).toDouble / buffer.getLong(1)
}

// Register the function
spark.udf.register("myAverage", MyAverage)
val df = spark.read.json("examples/src/main/resources/employees.json")
df.createOrReplaceTempView("employees")
df.show()
// +-------+------+
// |   name|salary|
// +-------+------+
// |Michael|  3000|
// |   Andy|  4500|
// | Justin|  3500|
// |  Berta|  4000|
// +-------+------+

val result = spark.sql("SELECT myAverage(salary) as average_salary FROM employees")
result.show()
// +--------------+
// |average_salary|
// +--------------+
// |        3750.0|
// +--------------+

Strongly typed user-defined aggregate function: extend Aggregator to implement a strongly typed custom aggregate function. The example again computes the average salary.

import org.apache.spark.sql.expressions.Aggregator
import org.apache.spark.sql.Encoder
import org.apache.spark.sql.Encoders
import org.apache.spark.sql.SparkSession

// Because the API is strongly typed, case classes describe the input and the buffer
case class Employee(name: String, salary: Long)
case class Average(var sum: Long, var count: Long)

object MyAverage extends Aggregator[Employee, Average, Double] {

  // The zero value: a buffer holding the salary total and the salary count, both initially 0
  def zero: Average = Average(0L, 0L)

  // Combine two values to produce a new value. For performance, the function may modify `buffer`
  // and return it instead of constructing a new object
  def reduce(buffer: Average, employee: Employee): Average = {
    buffer.sum += employee.salary
    buffer.count += 1
    buffer
  }

  // Merge the partial results from different executors
  def merge(b1: Average, b2: Average): Average = {
    b1.sum += b2.sum
    b1.count += b2.count
    b1
  }

  // Compute the output
  def finish(reduction: Average): Double = reduction.sum.toDouble / reduction.count

  // Encoder for the intermediate value type, which is a case class
  // Encoders.product is the encoder for Scala tuples and case classes
  def bufferEncoder: Encoder[Average] = Encoders.product

  // Encoder for the final output value
  def outputEncoder: Encoder[Double] = Encoders.scalaDouble
}

import spark.implicits._
val ds = spark.read.json("examples/src/main/resources/employees.json").as[Employee]
ds.show()
// +-------+------+
// |   name|salary|
// +-------+------+
// |Michael|  3000|
// |   Andy|  4500|
// | Justin|  3500|
// |  Berta|  4000|
// +-------+------+

// Convert the function to a `TypedColumn` and give it a name
val averageSalary = MyAverage.toColumn.name("average_salary")
val result = ds.select(averageSalary)
result.show()
// +--------------+
// |average_salary|
// +--------------+
// |        3750.0|
// +--------------+
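To see why both a per-row step and a merge step are needed, the zero / reduce / merge / finish contract can be simulated in plain Scala, with each inner sequence standing in for one partition. This is only a model of the contract, not Spark code; the object name AggregatorContractSketch and the immutable Avg buffer are assumptions made for the illustration.

```scala
// Hypothetical stand-alone model of the Aggregator contract (no Spark involved).
object AggregatorContractSketch {
  final case class Avg(sum: Long, count: Long)

  val zero: Avg = Avg(0L, 0L)

  // Fold one salary into a partition-local buffer.
  def reduce(b: Avg, salary: Long): Avg = Avg(b.sum + salary, b.count + 1)

  // Combine the buffers produced by two partitions.
  def merge(a: Avg, b: Avg): Avg = Avg(a.sum + b.sum, a.count + b.count)

  // Turn the final buffer into the result.
  def finish(r: Avg): Double = r.sum.toDouble / r.count

  // Each inner Seq plays the role of one partition.
  def average(partitions: Seq[Seq[Long]]): Double = {
    val partials = partitions.map(_.foldLeft(zero)(reduce))
    finish(partials.foldLeft(zero)(merge))
  }
}
```

Calling AggregatorContractSketch.average(Seq(Seq(3000L, 4500L), Seq(3500L, 4000L))) gives 3750.0, the same value as the employees example, regardless of how the four salaries are split across the simulated partitions.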

Notes:

Data:

{"name":"Michael", "age":11}

{"name":"Andy", "age":30}

{"name":"Justin", "age":19}

Code:

package com.lxl

import org.apache.spark.SparkConf
import org.apache.spark.sql.expressions.{MutableAggregationBuffer, UserDefinedAggregateFunction}
import org.apache.spark.sql.types._
import org.apache.spark.sql.{Row, SparkSession}

class CustomerAvg extends UserDefinedAggregateFunction {
  // Input type
  override def inputSchema: StructType = StructType(StructField("salary", LongType) :: Nil)
  // Buffer type
  override def bufferSchema: StructType = StructType(StructField("sum", LongType) :: StructField("count", LongType) :: Nil)
  // Return type
  override def dataType: DataType = DoubleType
  // Deterministic: the same input always gives the same output
  override def deterministic: Boolean = true
  // Initialize the buffer
  override def initialize(buffer: MutableAggregationBuffer): Unit = {
    buffer(0) = 0L
    buffer(1) = 0L
  }
  // Update the buffer with one input row, skipping null values
  override def update(buffer: MutableAggregationBuffer, input: Row): Unit = {
    if (!input.isNullAt(0)) {
      buffer(0) = buffer.getLong(0) + input.getLong(0)
      buffer(1) = buffer.getLong(1) + 1L
    }
  }
  // Merge two buffers
  override def merge(buffer1: MutableAggregationBuffer, buffer2: Row): Unit = {
    buffer1(0) = buffer1.getLong(0) + buffer2.getLong(0)
    buffer1(1) = buffer1.getLong(1) + buffer2.getLong(1)
  }
  // Final result; toDouble avoids integer division
  override def evaluate(buffer: Row): Double = {
    buffer.getLong(0).toDouble / buffer.getLong(1)
  }
}

object CustomerAvg {
  def main(args: Array[String]): Unit = {
    // Build the configuration
    val conf = new SparkConf().setMaster("local[*]").setAppName("MyAvg")
    // Create the SparkSession
    val spark = SparkSession.builder().config(conf).getOrCreate()
    spark.udf.register("MyAvg", new CustomerAvg)
    // Backslashes in a Windows path must be escaped
    val df = spark.read.json("D:\\Data\\Spark\\课堂\\people.json")
    df.createTempView("people")
    spark.sql("select MyAvg(age) avg_age from people").show()
    spark.stop()
  }
}

Result:

+-------+
|avg_age|
+-------+
|   20.0|
+-------+

Process finished with exit code 0