第4章 IDEA环境应用

spark shell仅在测试和验证我们的程序时使用的较多,在生产环境中,通常会在IDE中编制程序,然后打成jar包,然后提交到集群,最常用的是创建一个Maven项目,利用Maven来管理jar包的依赖。

4.1 在IDEA中编写WordCount程序

1)创建一个Maven项目WordCount并导入依赖

<dependencies> <dependency> <groupId>org.apache.spark</groupId> <artifactId>spark-core_2.11</artifactId> <version>2.1.1</version> </dependency> </dependencies> <build> <finalName>WordCount</finalName> <plugins> <plugin> <groupId>net.alchim31.maven</groupId> <artifactId>scala-maven-plugin</artifactId> <version>3.2.2</version> <executions> <execution> <goals> <goal>compile</goal> <goal>testCompile</goal> </goals> </execution> </executions> </plugin> <plugin> <groupId>org.apache.maven.plugins</groupId> <artifactId>maven-assembly-plugin</artifactId> <version>3.0.0</version> <configuration> <archive> <manifest> <mainClass>WordCount(修改)</mainClass> </manifest> </archive> <descriptorRefs> <descriptorRef>jar-with-dependencies</descriptorRef> </descriptorRefs> </configuration> <executions> <execution> <id>make-assembly</id> <phase>package</phase> <goals> <goal>single</goal> </goals> </execution> </executions> </plugin> </plugins> </build>

2)编写代码

package com.atguigu import org.apache.spark.{SparkConf, SparkContext} object WordCount{ def main(args: Array[String]): Unit = { //创建SparkConf并设置App名称 val conf = new SparkConf().setAppName("WC") //创建SparkContext,该对象是提交Spark App的入口 val sc = new SparkContext(conf) //使用sc创建RDD并执行相应的transformation和action sc.textFile(args(0)).flatMap(_.split(" ")).map((_, 1)).reduceByKey(_+_, 1).sortBy(_._2, false).saveAsTextFile(args(1)) sc.stop() } }

3)打包到集群测试

bin/spark-submit --class WordCount --master spark://hadoop102:7077 WordCount.jar /word.txt /out

4.2 本地调试

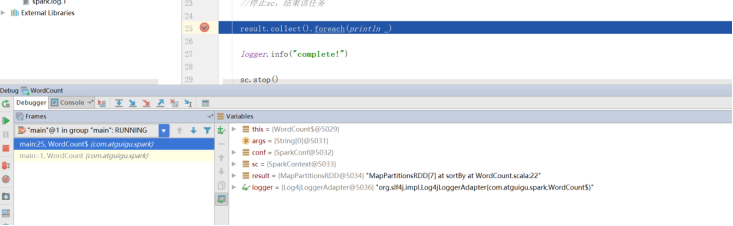

本地Spark程序调试需要使用local提交模式,即将本机当做运行环境,Master和Worker都为本机。运行时直接加断点调试即可。如下:

创建SparkConf的时候设置额外属性,表明本地执行:

val conf = new SparkConf().setAppName("WC").setMaster("local[*]")

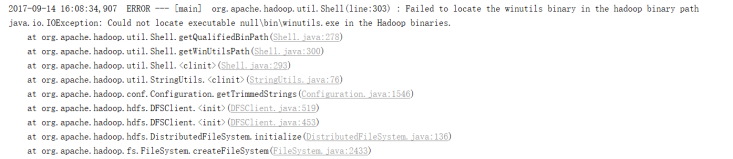

如果本机操作系统是windows,如果在程序中使用了hadoop相关的东西,比如写入文件到HDFS,则会遇到如下异常:

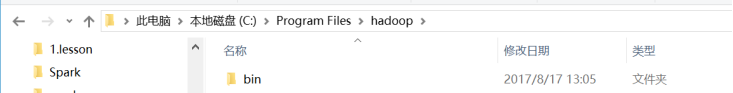

出现这个问题的原因,并不是程序的错误,而是用到了hadoop相关的服务,解决办法是将附加里面的hadoop-common-bin-2.7.3-x64.zip解压到任意目录。

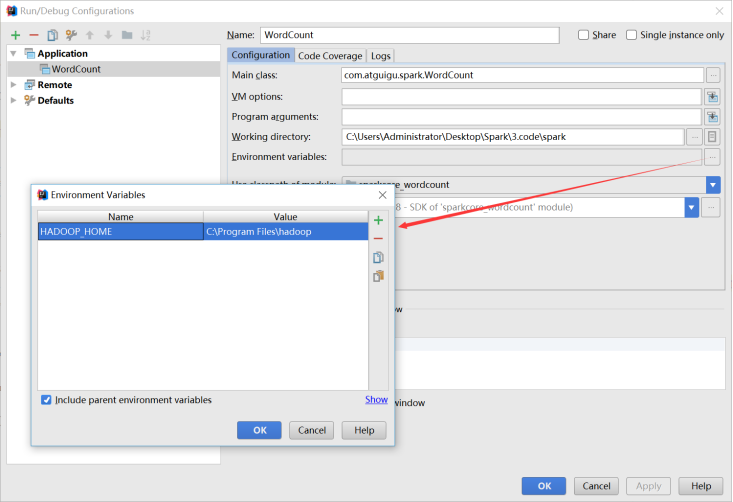

在IDEA中配置Run Configuration,添加HADOOP_HOME变量

4.3 远程调试

通过IDEA进行远程调试,主要是将IDEA作为Driver来提交应用程序,配置过程如下:

修改sparkConf,添加最终需要运行的Jar包、Driver程序的地址,并设置Master的提交地址:

val conf = new SparkConf().setAppName("WC")

.setMaster("spark://hadoop102:7077")

.setJars(List("E:\SparkIDEA\spark_test\target\WordCount.jar"))

然后加入断点,直接调试即可:

本地直接运行

package com.briup.core

import org.apache.spark.{SparkConf, SparkContext}

object WordCount {

def main(args: Array[String]): Unit = {

//1 获取配置SparkConf

// spark-submit master name 在命令行里设置

// 右键运行 master name 代码配置

val conf = new SparkConf()

.setMaster("local[*]")

.setAppName("dzy_wordCount")

//2 SparkContext

val sc= new SparkContext(conf)

//3 RDD

val lines = sc.textFile("file:///opt/software/spark/README.md")

//4 执行操作 flatten

// Mapper ----> shuffle ---->Reducer

val rdd1 = lines.flatMap(_.split(" "))

.groupBy(x => x)

.mapValues(x => x.size)

//* 序列化执行结果

rdd1.foreach(println)

rdd1.saveAsTextFile("file:///home/dengzhiyong/Documents/IDEA_workspace/IdeaProjects/ECJTU_Spack_Ecosphere/Spark/src/main/scala/com/briup/core/result")

//5 关闭sc

sc.stop()

}

}