避免重复访问

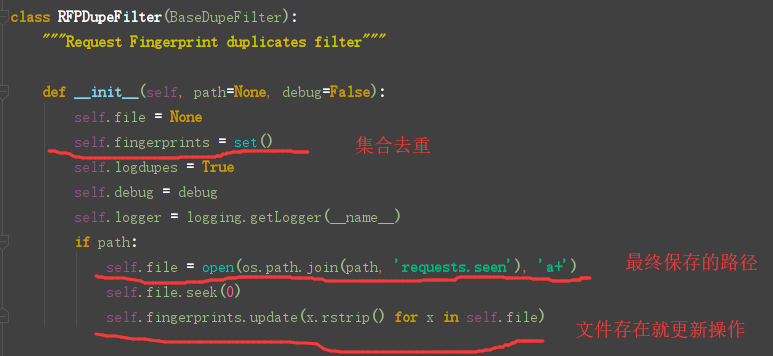

scrapy默认使用 scrapy.dupefilter.RFPDupeFilter 进行去重,相关配置有:

1 DUPEFILTER_CLASS = 'scrapy.dupefilter.RFPDupeFilter' 2 DUPEFILTER_DEBUG = False 3 JOBDIR = "保存记录的日志路径,如:/root/" # 最终路径为 /root/requests.seen

自定义url去重操作

Chouti.py

1 # -*- coding: utf-8 -*- 2 import scrapy 3 from wyb.items import WybItem 4 from scrapy.dupefilters import RFPDupeFilter 5 6 7 class ChoutiSpider(scrapy.Spider): 8 name = 'chouti' 9 # 爬取定向的网页 只允许这个域名的 10 allowed_domains = ['chouti.com'] 11 start_urls = ['https://dig.chouti.com/'] 12 13 def parse(self, response): 14 from scrapy.http.response.html import HtmlResponse 15 print(response.request.url) 16 # print(response, type(response)) 17 # print(response.text) 18 # item_list = response.xpath('//div[@id="content-list"]/div[@class="item"]') 19 # for item in item_list: 20 # text = item.xpath('.//a/text()').extract_first() 21 # href = item.xpath('.//a/@href').extract_first() 22 # yield WybItem(text=text, href=href) 23 page_list = response.xpath('//div[@id="dig_lcpage"]//a/@href').extract() 24 for page in page_list: 25 from scrapy.http import Request 26 page = "https://dig.chouti.com"+page 27 # 继续发请求,回调函数parse 28 yield Request(url=page, callback=self.parse, dont_filter=False) # 遵循去重操作

pipelines.py

1 # -*- coding: utf-8 -*- 2 3 # Define your item pipelines here 4 # 5 # Don't forget to add your pipeline to the ITEM_PIPELINES setting 6 # See: https://docs.scrapy.org/en/latest/topics/item-pipeline.html 7 8 from .settings import HREF_FILE_PATH 9 from scrapy.exceptions import DropItem 10 11 12 class WybPipeline(object): 13 def __init__(self, path): 14 self.f = None 15 self.path = path 16 17 @classmethod 18 def from_crawler(cls, crawler): 19 """ 20 初始化时候,用于创建pipline对象 21 :param crawler: 22 :return: 23 """ 24 path = crawler.settings.get('HREF_FILE_PATH') 25 return cls(path) 26 27 def open_spider(self, spider): 28 """ 29 爬虫开始执行被调用 30 :param spider: 31 :return: 32 """ 33 # if spider.name == "chouti": 34 # self.f = open(HREF_FILE_PATH, 'a+') 35 self.f = open(self.path, 'a+') 36 37 def process_item(self, item, spider): 38 # item就是yield返回的内容 39 # spider就是当前ChoutiSpider类的实例 40 # f = open('news.log', 'a+') 41 # f.write(item['href']) 42 # f.close() 43 self.f.write(item['href']+' ') 44 # return item # 不交给下一个pipeline的process_item去处理 45 raise DropItem() # 后续的 pipeline的process_item不再执行了 46 47 def close_spider(self, spider): 48 """ 49 爬虫关闭时调用 50 :param spider: 51 :return: 52 """ 53 self.f.close() 54 55 56 class DBPipeline(object): 57 def __init__(self, path): 58 self.f = None 59 self.path = path 60 61 @classmethod 62 def from_crawler(cls, crawler): 63 """ 64 初始化时候,用于创建pipline对象 65 :param crawler: 66 :return: 67 """ 68 path = crawler.settings.get('HREF_DB_PATH') 69 return cls(path) 70 71 def open_spider(self, spider): 72 """ 73 爬虫开始执行被调用 74 :param spider: 75 :return: 76 """ 77 # self.f = open(HREF_FILE_PATH, 'a+') 78 self.f = open(self.path, 'a+') 79 80 def process_item(self, item, spider): 81 # item就是yield返回的内容 82 # spider就是当前ChoutiSpider类的实例 83 # f = open('news.log', 'a+') 84 # f.write(item['href']) 85 # f.close() 86 self.f.write(item['href'] + ' ') 87 return item 88 89 def close_spider(self, spider): 90 """ 91 爬虫关闭时调用 92 :param spider: 93 :return: 94 """ 95 self.f.close()

items.py

1 # -*- coding: utf-8 -*- 2 3 # Define here the models for your scraped items 4 # 5 # See documentation in: 6 # https://docs.scrapy.org/en/latest/topics/items.html 7 8 import scrapy 9 10 11 class WybItem(scrapy.Item): 12 # define the fields for your item here like: 13 # name = scrapy.Field() 14 title = scrapy.Field() 15 href = scrapy.Field()

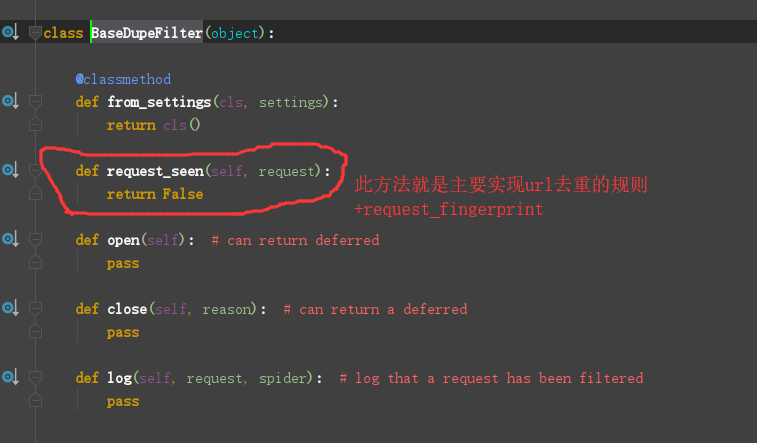

dupefilters.py

1 #!/usr/bin/env python 2 # -*- coding:utf-8 -*- 3 from scrapy.dupefilters import BaseDupeFilter 4 from scrapy.utils.request import request_fingerprint 5 6 7 class WybDuperFilter(BaseDupeFilter): 8 def __init__(self): 9 self.visited_fd = set() 10 11 @classmethod 12 def from_settings(cls, settings): 13 return cls() 14 15 def request_seen(self, request): 16 # 变成url的唯一标识了 17 fd = request_fingerprint(request=request) 18 if fd in self.visited_fd: 19 return True 20 self.visited_fd.add(fd) 21 22 def open(self): # can return deferred 23 print('开始') 24 25 def close(self, reason): # can return a deferred 26 print('结束') 27 28 def log(self, request, spider): # log that a request has been filtered 29 print('日志')

settings.py

1 ITEM_PIPELINES = { 2 # 'wyb.pipelines.WybPipeline': 300, 3 # 'wyb.pipelines.DBPipeline': 301, 4 # 优先级 0-1000 越小优先级越快 5 } 6 7 HREF_FILE_PATH = 'news.log' 8 HREF_DB_PATH = 'db.log' 9 10 # 修改默认的去重规则 11 DUPEFILTER_CLASS = 'wyb.dupefilters.WybDuperFilter' 12 # DUPEFILTER_CLASS = 'scrapy.dupefilters.RFPDupeFilter'

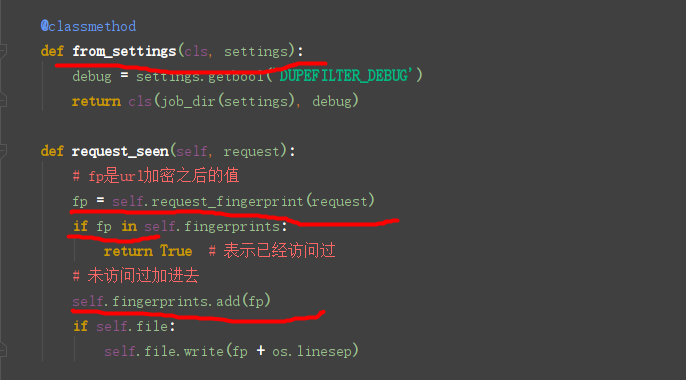

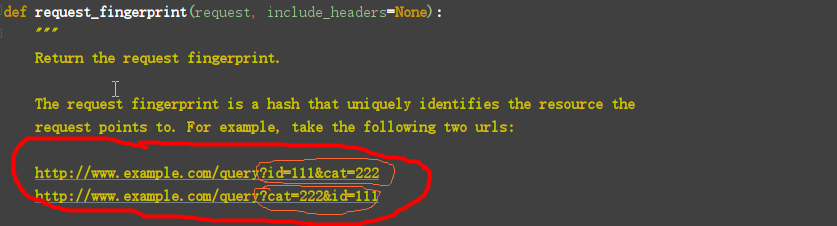

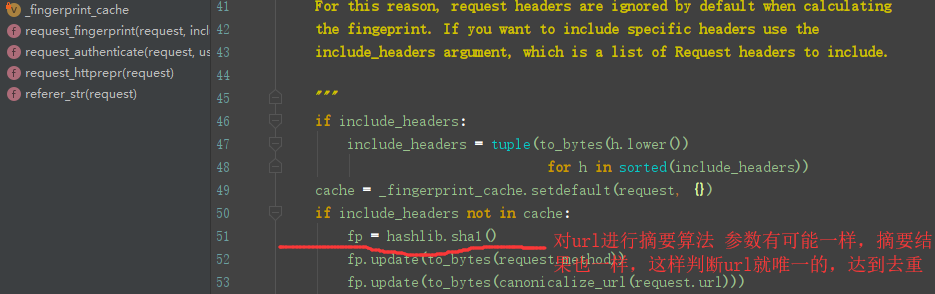

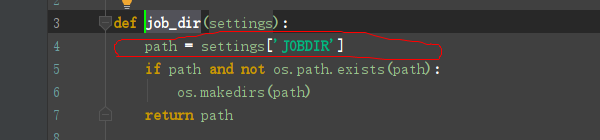

源码流程分析

Scrapy内部默认使用RFPDupeFilter去重

配置文件可以加上此路径

1 JOBDIR = "保存记录的日志路径,如:/root/" # 最终路径为 /root/requests.seen

自定义去重规则的时候

限制深度

1 # -*- coding: utf-8 -*- 2 import scrapy 3 from wyb.items import WybItem 4 from scrapy.dupefilters import RFPDupeFilter 5 6 7 class ChoutiSpider(scrapy.Spider): 8 name = 'chouti' 9 # 爬取定向的网页 只允许这个域名的 10 allowed_domains = ['chouti.com'] 11 start_urls = ['https://dig.chouti.com/'] 12 13 def parse(self, response): 14 from scrapy.http.response.html import HtmlResponse 15 print(response.request.url, response.meta['depth']) 16 # print(response.request.url) 17 # print(response, type(response)) 18 # print(response.text) 19 # item_list = response.xpath('//div[@id="content-list"]/div[@class="item"]') 20 # for item in item_list: 21 # text = item.xpath('.//a/text()').extract_first() 22 # href = item.xpath('.//a/@href').extract_first() 23 # yield WybItem(text=text, href=href) 24 # page_list = response.xpath('//div[@id="dig_lcpage"]//a/@href').extract() 25 # for page in page_list: 26 # from scrapy.http import Request 27 # page = "https://dig.chouti.com"+page 28 # # 继续发请求,回调函数parse 29 # yield Request(url=page, callback=self.parse, dont_filter=False) # 遵循去重操作

配置文件

1 # 限制深度 2 DEPTH_LIMIT = 3