distinct/groupByKey/reduceByKey:

distinct:

import org.apache.spark.SparkContext

import org.apache.spark.rdd.RDD

import org.apache.spark.sql.SparkSession

object TransformationsDemo {

def main(args: Array[String]): Unit = {

val sparkSession = SparkSession.builder().appName("TransformationsDemo").master("local[1]").getOrCreate()

val sc = sparkSession.sparkContext

testDistinct(sc)

}

private def testDistinct(sc: SparkContext) = {

val rdd = sc.makeRDD(Seq("aa", "bb", "cc", "aa", "cc"), 1)

//对RDD中的元素进行去重操作

rdd.distinct(1).collect().foreach(println)

}

}

运行结果:

groupByKey:

import org.apache.spark.SparkContext

import org.apache.spark.rdd.RDD

import org.apache.spark.sql.SparkSession

object TransformationsDemo {

def main(args: Array[String]): Unit = {

val sparkSession = SparkSession.builder().appName("TransformationsDemo").master("local[1]").getOrCreate()

val sc = sparkSession.sparkContext

testGroupByKey(sc)

}

private def testGroupByKey(sc: SparkContext) = {

val rdd: RDD[(String, Int)] = sc.makeRDD(Seq(("aa", 1), ("bb", 1), ("cc", 1), ("aa", 1), ("cc", 1)), 1)

//pair RDD,即RDD的每一行是(key, value),key相同进行聚合

rdd.groupByKey().map(v => (v._1, v._2.sum)).collect().foreach(println)

}

}

运行结果:

reduceByKey:

import org.apache.spark.SparkContext

import org.apache.spark.rdd.RDD

import org.apache.spark.sql.SparkSession

object TransformationsDemo {

def main(args: Array[String]): Unit = {

val sparkSession = SparkSession.builder().appName("TransformationsDemo").master("local[1]").getOrCreate()

val sc = sparkSession.sparkContext

testReduceByKey(sc)

}

private def testReduceByKey(sc: SparkContext) = {

val rdd: RDD[(String, Int)] = sc.makeRDD(Seq(("aa", 1), ("bb", 1), ("cc", 1), ("aa", 1), ("cc", 1)), 1)

//pair RDD,即RDD的每一行是(key, value),key相同进行聚合

rdd.reduceByKey(_+_).collect().foreach(println)

}

}

运行结果:

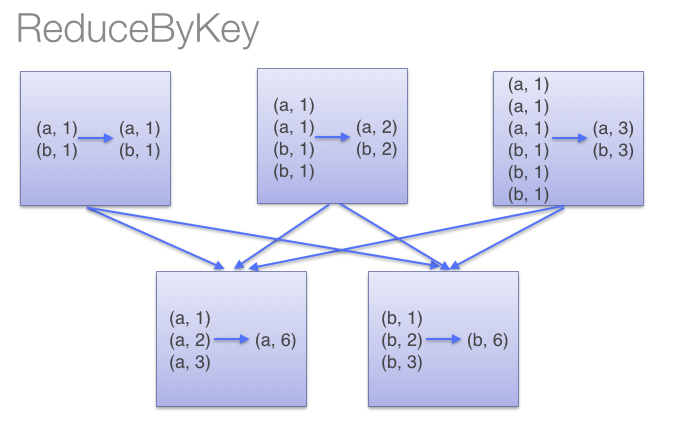

groupByKey与 reduceByKey区别:

reduceByKey用于对每个key对应的多个value进行merge操作,最重要的是它能够在本地先进行merge操作,并且merge操作可以通过函数自定义。groupByKey也是对每个key进行操作,但只生成一个sequence。因为groupByKey不能自定义函数,我们需要先用groupByKey生成RDD,然后才能对此RDD通过map进行自定义函数操作。当调用 groupByKey时,所有的键值对(key-value pair) 都会被移动。在网络上传输这些数据非常没有必要。避免使用 GroupByKey。