The error message shows that Hadoop classes cannot be found on the classpath.

    [root@hadoop01 bin]# ./spark2-shell
    Exception in thread "main" java.lang.NoClassDefFoundError: org/apache/hadoop/fs/FSDataInputStream
        at org.apache.spark.deploy.SparkSubmitArguments$$anonfun$mergeDefaultSparkProperties$1.apply(SparkSubmitArguments.scala:124)
        at org.apache.spark.deploy.SparkSubmitArguments$$anonfun$mergeDefaultSparkProperties$1.apply(SparkSubmitArguments.scala:124)
        at scala.Option.getOrElse(Option.scala:121)
        at org.apache.spark.deploy.SparkSubmitArguments.mergeDefaultSparkProperties(SparkSubmitArguments.scala:124)
        at org.apache.spark.deploy.SparkSubmitArguments.<init>(SparkSubmitArguments.scala:110)
        at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:119)
        at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)
    Caused by: java.lang.ClassNotFoundException: org.apache.hadoop.fs.FSDataInputStream
        at java.net.URLClassLoader.findClass(URLClassLoader.java:381)
        at java.lang.ClassLoader.loadClass(ClassLoader.java:424)
        at sun.misc.Launcher$AppClassLoader.loadClass(Launcher.java:335)
        at java.lang.ClassLoader.loadClass(ClassLoader.java:357)
        ... 7 more
    [root@hadoop01 bin]#

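Cause: since Spark 1.4, Spark has been available as a "Hadoop free" build that does not bundle the Hadoop jars, and the build used here does not carry Hadoop's classes on its own classpath. Hadoop's jars therefore have to be supplied explicitly in spark-env.sh, via SPARK_DIST_CLASSPATH.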
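One way to confirm this diagnosis is to check whether the Spark installation ships any Hadoop jars at all. The sketch below is only illustrative: `list_hadoop_jars` is a hypothetical helper, and it assumes that `SPARK_HOME` points at the Spark 2 installation and that its jars live in a `jars` subdirectory, which may differ in a parcel-based install.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print any hadoop-*.jar files found directly under a directory.
list_hadoop_jars() {
  local dir="$1"
  # Stay silent (and print nothing) if the directory is missing or has no match.
  find "$dir" -maxdepth 1 -name 'hadoop-*.jar' 2>/dev/null
}

# Assumption: SPARK_HOME is set and jars are stored in $SPARK_HOME/jars.
jars_dir="${SPARK_HOME:-.}/jars"
if [ -z "$(list_hadoop_jars "$jars_dir")" ]; then
  echo "No hadoop-*.jar under $jars_dir: Hadoop classes must come from SPARK_DIST_CLASSPATH"
else
  echo "Hadoop jars are bundled under $jars_dir"
fi
```

If the first message is printed, the NoClassDefFoundError above is expected until SPARK_DIST_CLASSPATH is set.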

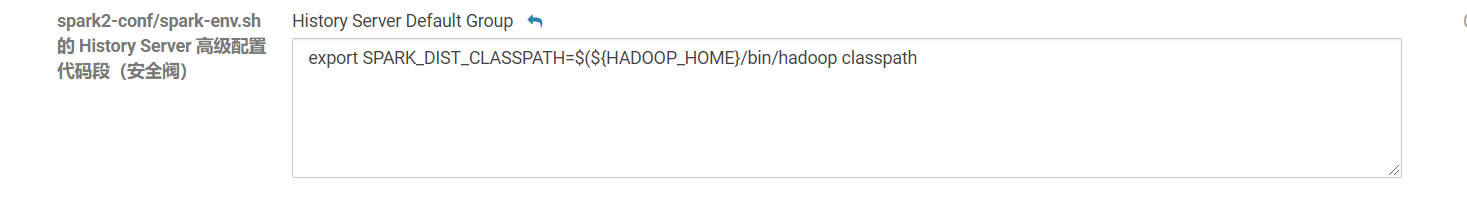

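Fix: in the Cloudera Manager (CM) web UI, edit the Spark 2.2 configuration to set SPARK_DIST_CLASSPATH as shown below, then restart the services that CM marks as having a stale configuration.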

export SPARK_DIST_CLASSPATH=$(${HADOOP_HOME}/bin/hadoop classpath)
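
The export above fails silently if `${HADOOP_HOME}` is unset or does not contain `bin/hadoop`. A slightly more defensive variant could look like the following sketch. `resolve_hadoop_classpath` is a hypothetical helper name, and falling back to a `hadoop` launcher on `PATH` is an assumption about the environment, not something taken from the original setup.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the Hadoop classpath, or fail with a message.
resolve_hadoop_classpath() {
  local hadoop_bin="${HADOOP_HOME:-}/bin/hadoop"
  if [ -x "$hadoop_bin" ]; then
    # Preferred: the launcher under HADOOP_HOME, as in the original export.
    "$hadoop_bin" classpath
  elif command -v hadoop >/dev/null 2>&1; then
    # Assumed fallback: a hadoop launcher found on PATH.
    hadoop classpath
  else
    echo "hadoop launcher not found; set HADOOP_HOME or fix PATH" >&2
    return 1
  fi
}

# Export only when the classpath could actually be resolved.
if hadoop_cp="$(resolve_hadoop_classpath)"; then
  export SPARK_DIST_CLASSPATH="$hadoop_cp"
fi
```

After the configuration is redeployed, `echo "$SPARK_DIST_CLASSPATH"` in the shell that launches `spark2-shell` should print a non-empty list of Hadoop directories and jar globs.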